I must highlight some caveats of the results. These tests compare the performance of different methods and point to the ones that are noticeably faster than others. The exact values of requests per second might vary based on OS, hardware, load, and many other factors. However, this can be different in your AWS region. I would choose a 5 or 10-gigabit network to run my application as the increase in speed does not justify the costs.

The InitiateMultipartUploadRequest needs to read from an input stream. You have probably heard that you can copy data from an InputStream to a ByteArrayOutputStream. This is a valid constraint, since in general you can only write to output streams. Then take the resulting byte array and create a ByteArrayInputStream.
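The copy from an InputStream into a ByteArrayOutputStream, and then into a ByteArrayInputStream, can be sketched with the standard library alone. This is a minimal illustration, not the code used for the measurements: the class name `StreamBuffering` and the method `bufferToInputStream` are hypothetical, and the whole payload is held in memory, so it only suits data that fits comfortably in the heap.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

// Hypothetical helper, named for illustration only.
final class StreamBuffering {

    // Drains `source` into an in-memory buffer and returns a new stream
    // over the buffered bytes that can be handed to an upload request.
    static ByteArrayInputStream bufferToInputStream(InputStream source) {
        ByteArrayOutputStream sink = new ByteArrayOutputStream();
        byte[] chunk = new byte[8192];
        try {
            int read;
            // read() returns -1 once the source is exhausted
            while ((read = source.read(chunk)) != -1) {
                sink.write(chunk, 0, read);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return new ByteArrayInputStream(sink.toByteArray());
    }

    public static void main(String[] args) {
        byte[] payload = "example part data".getBytes(StandardCharsets.UTF_8);
        ByteArrayInputStream buffered = bufferToInputStream(new ByteArrayInputStream(payload));
        System.out.println("buffered bytes: " + buffered.available());
    }
}
```

Because the buffered stream knows its exact length up front (`available()`), it is convenient for requests that need a content length alongside the stream.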

Multipart Upload allows you to upload a single object as a set of parts. With this feature you can create parallel uploads easily. After all parts of your object are uploaded, Amazon S3 then presents the data as a single object.

I have chosen EC2 instances with higher network capacities. So here I am going from 5 → 10 → 25 → 50 gigabit network. For the larger instances, CPU and memory were barely being used, but this was the smallest instance with a 50-gigabit network that was available on AWS ap-southeast-2 (Sydney). I could upload a 100GB file in less than 7 minutes. However, a more in-depth cost-benefit analysis needs to be done for real-world use cases as the bigger instances are significantly more expensive.

For all use cases of uploading files larger than 100MB, single or multiple, async multipart upload is by far the best approach in terms of efficiency and I would choose that by default. However, if the team is not familiar with async programming & AWS S3, then s3PutObject from a file is a good middle ground. For files that are guaranteed to never exceed 5MB, s3PutObject is slightly more efficient. However, the difference in performance is about 100ms. I would choose a single mechanism from above and use it for all sizes for simplicity.
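To make "a single object as a set of parts" concrete, here is a small sketch of how an object could be cut into numbered byte ranges before uploading. The names `PartPlanner`, `PartRange`, and `plan` are hypothetical and not part of the AWS SDK; the sketch only assumes that part numbers start at 1 and that every part except the last has the same size.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical planner, for illustration only (not an AWS SDK type).
final class PartPlanner {

    // One contiguous byte range of the object, identified by its part number.
    static final class PartRange {
        final int partNumber;
        final long offset;
        final long length;

        PartRange(int partNumber, long offset, long length) {
            this.partNumber = partNumber;
            this.offset = offset;
            this.length = length;
        }
    }

    // Splits an object of `objectSize` bytes into ranges of at most `partSize` bytes.
    // Only the last part may be shorter than `partSize`.
    static List<PartRange> plan(long objectSize, long partSize) {
        if (objectSize <= 0 || partSize <= 0) {
            throw new IllegalArgumentException("sizes must be positive");
        }
        List<PartRange> parts = new ArrayList<>();
        int partNumber = 1; // part numbers are 1-based
        for (long offset = 0; offset < objectSize; offset += partSize) {
            long length = Math.min(partSize, objectSize - offset);
            parts.add(new PartRange(partNumber++, offset, length));
        }
        return parts;
    }

    public static void main(String[] args) {
        long mib = 1024L * 1024L;
        for (PartRange p : plan(12 * mib, 5 * mib)) {
            System.out.println("part " + p.partNumber + " offset=" + p.offset + " length=" + p.length);
        }
    }
}
```

Each range can then be uploaded independently, which is what makes the parallel uploads possible.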

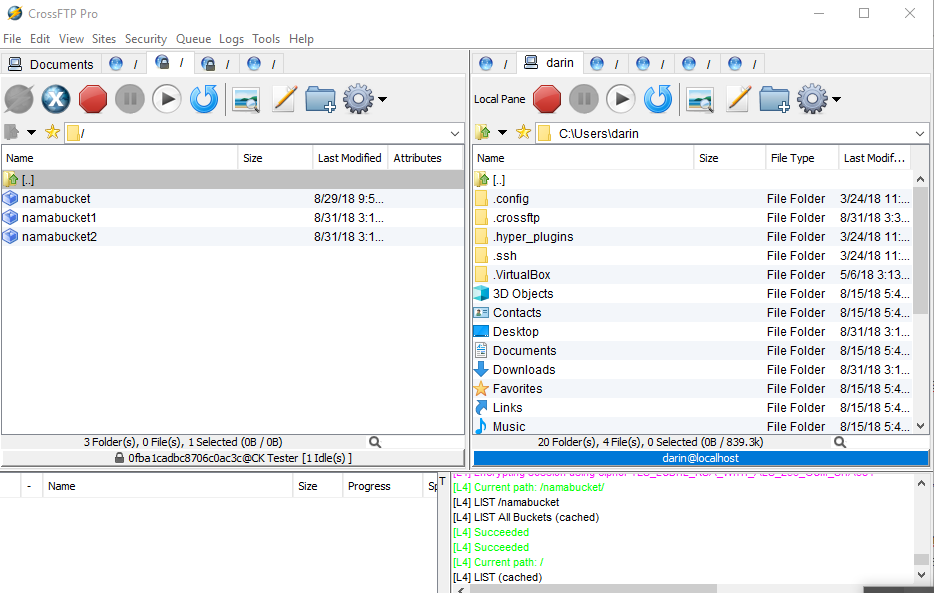

File Size

These results are from uploading various sized objects using a t3.medium AWS instance. However, for our comparison, we have a clear winner. With these changes, the total time for data generation and upload drops significantly. On instances with more resources, we could increase the thread pool size and get faster times. Beyond this point, the only way I could improve on the performance for individual uploads was to scale the EC2 instances vertically. Description: AWS Amazon S3 Multipart Uploader allows faster, more flexible uploads into your Amazon S3 bucket.
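The effect of the thread pool size mentioned above can be sketched as follows. This is not the benchmark code: `ParallelParts` and `runAll` are hypothetical names, and each task stands in for one part upload. The pool size caps how many tasks run at once, so a larger instance can simply be given a larger `poolSize`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.function.Supplier;

// Hypothetical runner, for illustration only.
final class ParallelParts {

    // Runs every task on a fixed-size pool and returns the results
    // in the same order as the input list, regardless of completion order.
    static <T> List<T> runAll(List<Supplier<T>> tasks, int poolSize) {
        ExecutorService pool = Executors.newFixedThreadPool(poolSize);
        try {
            List<CompletableFuture<T>> futures = new ArrayList<>();
            for (Supplier<T> task : tasks) {
                futures.add(CompletableFuture.supplyAsync(task, pool));
            }
            List<T> results = new ArrayList<>();
            for (CompletableFuture<T> future : futures) {
                results.add(future.join()); // blocks until this task is done
            }
            return results;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        List<Supplier<String>> uploads = new ArrayList<>();
        for (int i = 1; i <= 4; i++) {
            final int partNumber = i;
            // Stand-in for a real part upload.
            uploads.add(() -> "part-" + partNumber + " uploaded");
        }
        runAll(uploads, 2).forEach(System.out::println);
    }
}
```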

The complete step has similar changes, and we had to wait for all the parts to be uploaded before actually calling the SDK’s complete multipart method. Because of the asynchronous nature of the parts being uploaded, it is possible for the part numbers to be out of order and AWS expects them to be in order. I was getting the following error before I sorted the parts and their corresponding ETag.

s3.model.S3Exception: The list of parts was not in ascending order. (Service: S3, Status Code: 400, Request ID: T2DZJHWQ69SKWS15, Extended Request ID:
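The fix described above, waiting for every part and then sorting by part number before completing the upload, might look roughly like this. `UploadedPart` is a hypothetical stand-in for the SDK's completed-part type, and `awaitAndSort` is an illustrative name; only the waiting and ordering logic is shown, not the actual SDK call.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.stream.Collectors;

// Hypothetical helper, for illustration only.
final class PartOrdering {

    // Stand-in for the SDK's completed-part type: a part number and its ETag.
    static final class UploadedPart {
        final int partNumber;
        final String eTag;

        UploadedPart(int partNumber, String eTag) {
            this.partNumber = partNumber;
            this.eTag = eTag;
        }
    }

    // Waits for all part uploads to finish, then returns the parts in
    // ascending part-number order, each still paired with its own ETag.
    static List<UploadedPart> awaitAndSort(List<CompletableFuture<UploadedPart>> uploads) {
        CompletableFuture.allOf(uploads.toArray(new CompletableFuture[0])).join();
        return uploads.stream()
                .map(CompletableFuture::join)
                .sorted(Comparator.comparingInt((UploadedPart p) -> p.partNumber))
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Simulate parts that were collected in completion order, not part order.
        List<CompletableFuture<UploadedPart>> uploads = new ArrayList<>();
        uploads.add(CompletableFuture.completedFuture(new UploadedPart(3, "etag-3")));
        uploads.add(CompletableFuture.completedFuture(new UploadedPart(1, "etag-1")));
        uploads.add(CompletableFuture.completedFuture(new UploadedPart(2, "etag-2")));
        for (UploadedPart part : awaitAndSort(uploads)) {
            System.out.println(part.partNumber + " -> " + part.eTag);
        }
    }
}
```

Sorting the part objects themselves, rather than sorting part numbers and ETags in separate lists, keeps each ETag attached to the part it belongs to.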
